Mastering Transfer Learning: A Guide for Limited Datasets

Transfer learning lets a model start from weights that were pre-trained on a large dataset and then fine-tune them on a smaller one. The technique is particularly useful when data is limited, because knowledge gained from the larger dataset can be adapted to the specific task at hand. This article covers the benefits, limitations, and practical applications of transfer learning.

What is Transfer Learning?

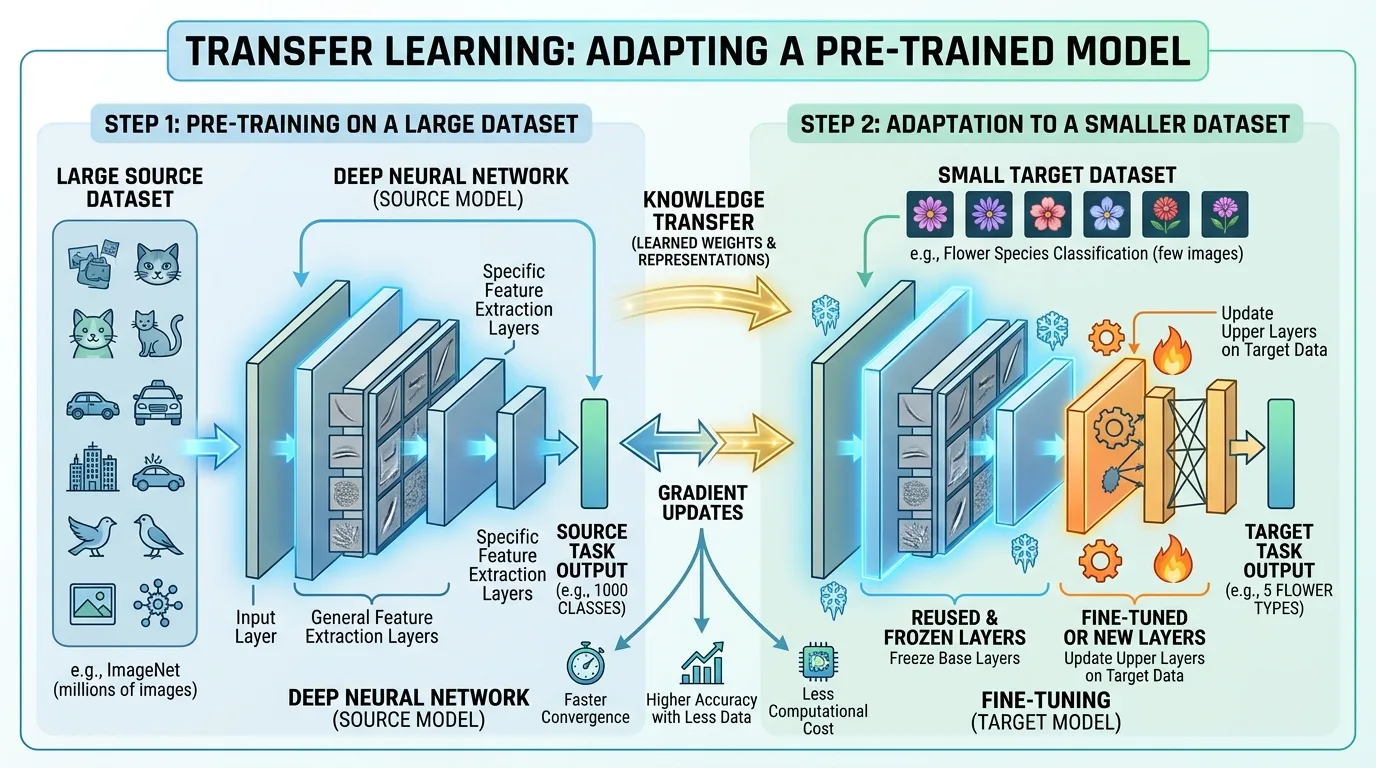

Transfer learning involves taking a pre-trained model and adapting it to a new but related task. This approach often improves model performance substantially, especially when working with limited datasets. By starting from a pre-trained model, developers reuse representations that were already learned from large datasets and avoid training a model from scratch.

The concept of transfer learning is built upon the idea that knowledge learned in one domain or task can be applied to another related domain or task. This is based on the premise that certain features or patterns are generalizable across tasks and datasets. In essence, pre-trained models have already learned to recognize these patterns, which can then be fine-tuned for specific tasks.

Benefits of Transfer Learning

Transfer learning offers several benefits that make it an attractive solution for developers and data enthusiasts:

Reduced Training Time

By starting from pre-trained weights, you skip most of the time-consuming process of training a model from scratch. With a limited dataset, this means existing knowledge can be adapted to your specific task quickly.

Improved Model Performance

Pre-trained models have already learned to recognize patterns in large datasets, which can lead to improved performance on related tasks. By reusing this knowledge, developers can often achieve better results than they would by training a model from scratch on the same small dataset.

Increased Efficiency

Adapting an existing model to a new task typically requires fewer computational resources and less time than training a model from scratch, because the weights start from a useful initialization and often only some of them are updated. Some hyperparameter tuning is still needed, most notably for the learning rate, but each trial is cheaper to run.

Limitations of Transfer Learning

While transfer learning offers many benefits, it also has some limitations:

Relatedness of Tasks

The success of transfer learning relies heavily on the similarity between the pre-trained model's task and the target task. If the tasks are too dissimilar, the pre-trained weights may not be effective.

Fine-Tuning Requirements

Transfer learning still requires fine-tuning the pre-trained model on the new task, and this can be time-consuming. The step is nonetheless crucial, because it is what adapts the existing knowledge to the specific task.

Applying Transfer Learning in Practice

To apply transfer learning effectively, follow these steps:

Step 1: Choose a Suitable Pre-Trained Model

Select a model that has been trained on a related task or dataset. Candidates can be found in online model repositories or among the pre-trained models that ship with popular deep learning frameworks.

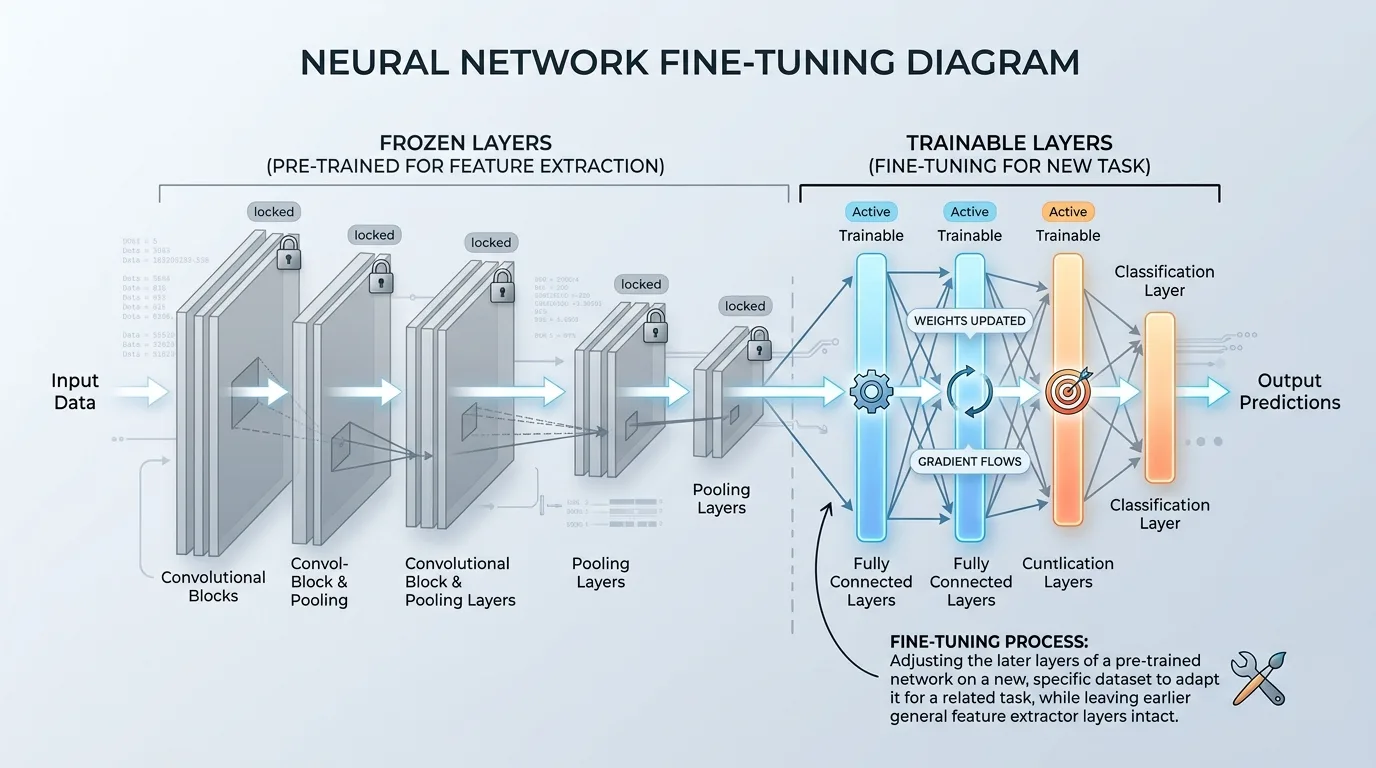

Step 2: Freeze Some Layers

Freeze the early layers of the pre-trained model so that training does not overwrite the features learned during pre-training. Early layers tend to capture general patterns, so keeping them fixed preserves the knowledge gained from the larger dataset while the later layers adapt to the new task.
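The freezing step can be sketched in plain Python, with no deep learning framework assumed. The `Layer` class, the layer names, and the gradient values below are all hypothetical and exist only for illustration; the point is that the update step skips any layer marked as frozen.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """A toy layer: a name, a flat list of weights, and a trainable flag."""
    name: str
    weights: list
    trainable: bool = True


def freeze_early_layers(layers, num_frozen):
    """Mark the first `num_frozen` layers as frozen and the rest as trainable."""
    for index, layer in enumerate(layers):
        layer.trainable = index >= num_frozen


def sgd_step(layers, grads, learning_rate):
    """Apply one gradient-descent update, leaving frozen layers untouched."""
    for layer, layer_grads in zip(layers, grads):
        if not layer.trainable:
            continue
        layer.weights = [
            w - learning_rate * g for w, g in zip(layer.weights, layer_grads)
        ]


# Hypothetical three-layer model; the weights stand in for pre-trained values.
model = [
    Layer("conv1", [0.5, -0.2]),
    Layer("conv2", [0.1, 0.3]),
    Layer("classifier", [0.0, 0.0]),
]
freeze_early_layers(model, num_frozen=2)

# Made-up gradients, one list per layer.
fake_grads = [[1.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
sgd_step(model, fake_grads, learning_rate=0.1)

for layer in model:
    print(layer.name, layer.trainable, layer.weights)
```

Only the `classifier` weights change here. In PyTorch the same effect usually comes from setting `requires_grad = False` on the parameters to freeze, and in Keras from setting `layer.trainable = False`.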

Step 3: Fine-Tune Remaining Layers

Fine-tune the remaining layers to adapt the model to your specific task. This step requires careful tuning of hyperparameters, such as learning rate and batch size, to optimize the fine-tuning process.
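As a rough illustration of this step, the sketch below trains only a small logistic-regression head on top of a fixed feature function. Everything in it is an assumption made for the example: `frozen_features` is a hypothetical stand-in for a frozen pre-trained backbone, the six data points are made up, and the learning rate and epoch count are arbitrary starting values rather than recommendations.

```python
import math


def frozen_features(x):
    """Hypothetical stand-in for a frozen pre-trained backbone; never updated."""
    return [x[0] + x[1], x[0] - x[1]]


def predict(head, feats):
    """Logistic-regression head applied to the frozen features."""
    z = head["bias"] + sum(w * f for w, f in zip(head["weights"], feats))
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune(head, data, learning_rate, epochs):
    """Train only the head with per-sample SGD on log loss; return mean loss per epoch."""
    history = []
    for _ in range(epochs):
        total = 0.0
        for x, y in data:
            feats = frozen_features(x)
            p = predict(head, feats)
            total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
            error = p - y  # gradient of the log loss w.r.t. the pre-sigmoid score
            head["weights"] = [
                w - learning_rate * error * f
                for w, f in zip(head["weights"], feats)
            ]
            head["bias"] -= learning_rate * error
        history.append(total / len(data))
    return history


# Made-up toy dataset: the label is 1 when the two inputs sum to a positive value.
train_data = [
    ([1.0, 0.5], 1), ([0.8, 1.2], 1), ([0.4, 0.9], 1),
    ([-1.0, -0.3], 0), ([-0.6, -1.1], 0), ([-0.2, -0.7], 0),
]
head = {"weights": [0.0, 0.0], "bias": 0.0}
loss_history = fine_tune(head, train_data, learning_rate=0.1, epochs=50)
print(f"first epoch loss: {loss_history[0]:.3f}, last: {loss_history[-1]:.3f}")
```

The backbone function is never modified, so all learning happens in the head. This mirrors the split between frozen and fine-tuned layers described in the steps above.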

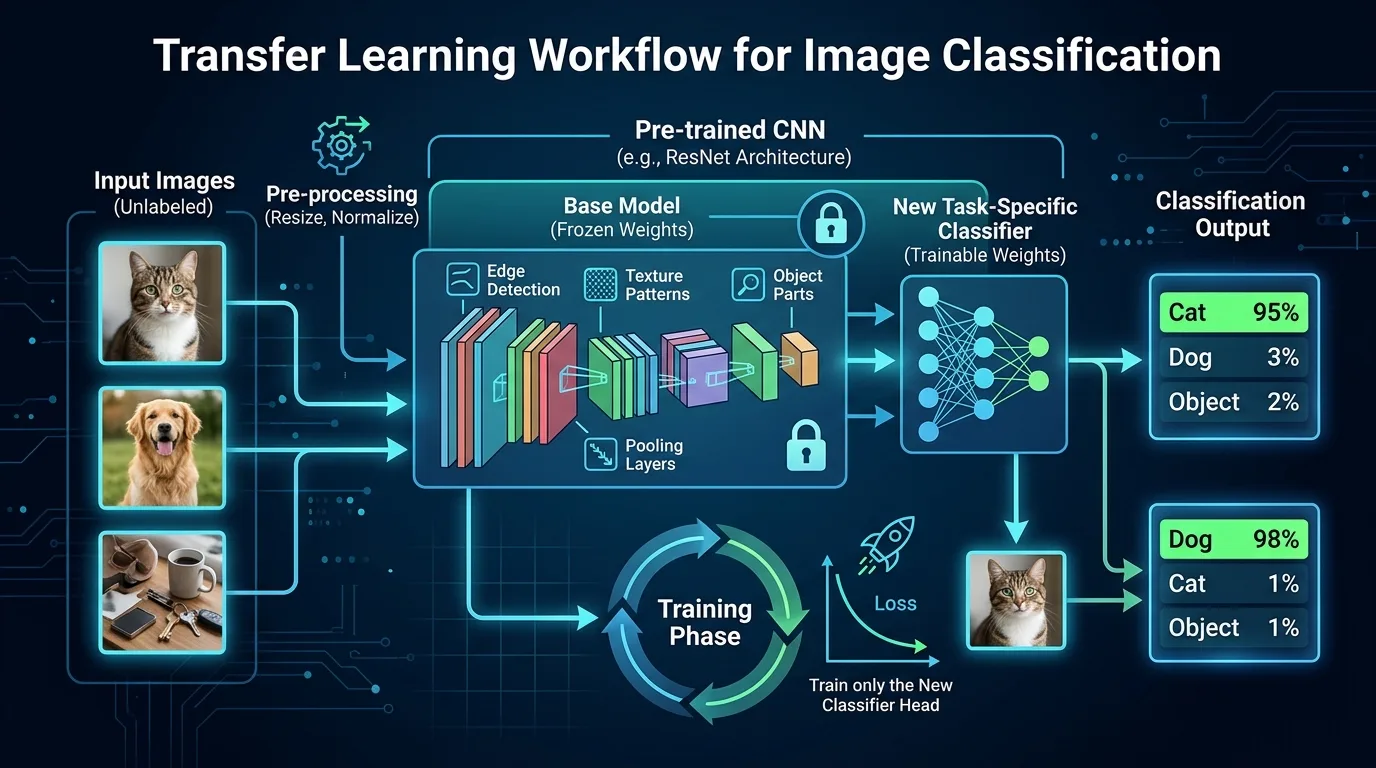

Example Use Case: Image Classification

Suppose you want to classify images into different categories, but you only have a limited dataset of images. You can start from a pre-trained convolutional neural network (CNN) such as VGG16 or ResNet50, both of which are commonly used with weights trained on the ImageNet dataset. By fine-tuning one of these models on your specific task, you can often reach strong performance with significantly fewer training examples than training from scratch would need.

Tips for Effective Transfer Learning

Monitor Performance

Regularly monitor the model's performance during fine-tuning to ensure it is adapting correctly. This can be achieved by tracking metrics such as accuracy and loss over time.
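One common way to act on these metrics is early stopping: halt fine-tuning once the validation loss has stopped improving. The helper below is a minimal sketch of that idea, not a prescribed method. The `EarlyStopping` class, its `patience` and `min_delta` parameters, and the loss values are all made up for illustration.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def update(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses: improving at first, then getting worse.
val_losses = [0.90, 0.70, 0.62, 0.60, 0.63, 0.66, 0.71, 0.80]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.update(epoch, loss):
        stopped_at = epoch
        break

print(f"best epoch: {stopper.best_epoch}, stopped at epoch: {stopped_at}")
```

A validation loss that rises while the training loss keeps falling is a typical sign of overfitting, which is a particular risk with small datasets.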

Tune Hyperparameters

Adjust hyperparameters, such as learning rate and batch size, and compare the results on held-out data to find the settings that work best for the fine-tuning process.
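A simple way to organize this search is a grid over candidate values, sketched below. The `grid_search` helper is hypothetical, and `toy_validation_loss` is a made-up formula standing in for a real fine-tuning run that returns a validation loss; the candidate values are arbitrary examples.

```python
from itertools import product


def grid_search(train_and_validate, learning_rates, batch_sizes):
    """Evaluate every (learning_rate, batch_size) pair; return the best pair and all results."""
    results = {}
    for learning_rate, batch_size in product(learning_rates, batch_sizes):
        results[(learning_rate, batch_size)] = train_and_validate(
            learning_rate, batch_size
        )
    best = min(results, key=results.get)  # lowest validation loss wins
    return best, results


def toy_validation_loss(learning_rate, batch_size):
    """Made-up stand-in for a fine-tuning run; lowest at lr=1e-3 and batch_size=16."""
    return abs(learning_rate - 1e-3) * 100 + abs(batch_size - 16) / 100


best_config, all_results = grid_search(
    toy_validation_loss,
    learning_rates=[1e-4, 1e-3, 1e-2],
    batch_sizes=[8, 16, 32],
)
print("best (learning_rate, batch_size):", best_config)
```

In practice `train_and_validate` would run a short fine-tuning job for each pair, so the grid is best kept small.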

Experiment with Different Models

Try different pre-trained models or architectures to find the best fit for your task, evaluating each candidate on the same validation set so that the results are comparable.
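With a small dataset, a single validation split can give noisy comparisons. One option, which is an assumption of this sketch and not something the steps above require, is to average each candidate's score over k folds. The helper names and the `candidates` mapping are hypothetical; each value in `candidates` is expected to be a callable that fine-tunes on the training indices and returns a score for the validation indices.

```python
def k_fold_indices(num_samples, k):
    """Yield (train_indices, val_indices) pairs for k contiguous, roughly equal folds."""
    fold_sizes = [
        num_samples // k + (1 if i < num_samples % k else 0) for i in range(k)
    ]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, num_samples))
        yield train, val
        start += size


def compare_models(candidates, num_samples, k):
    """Return a dict mapping each candidate name to its mean score over k folds."""
    scores = {}
    for name, evaluate in candidates.items():
        fold_scores = [
            evaluate(train, val) for train, val in k_fold_indices(num_samples, k)
        ]
        scores[name] = sum(fold_scores) / len(fold_scores)
    return scores


# Show how 10 samples are split into 3 folds.
folds = list(k_fold_indices(10, 3))
for train, val in folds:
    print("train:", train, "val:", val)
```

The folds here are contiguous, so the data should be shuffled beforehand if its order carries any meaning, such as samples sorted by class.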

Overcoming Limited Dataset Challenges

Transfer learning is particularly useful when dealing with limited datasets. By leveraging pre-trained weights, you can:

Improve Model Performance

Even with a small dataset, transfer learning can help improve model performance by adapting existing knowledge.

Increase Data Efficiency

Transfer learning enables you to get more out of your limited data, making it an attractive solution for resource-constrained projects.

Conclusion

Transfer learning is a powerful technique for building models when data is scarce. By starting from pre-trained models and fine-tuning them on smaller datasets, developers can achieve improved performance with reduced training time. It also has limitations that must be considered: the source and target tasks need to be related, and fine-tuning itself takes care. With careful application and experimentation, transfer learning can overcome many of the challenges posed by limited datasets.